- Tesla kicked off its autonomy day on Monday morning at its Palo Alto headquarters.

- CEO Elon Musk, along with director of AI Andrej Karpathy and head of hardware engineering Pete Bannon, showed off the latest in Tesla’s self-driving technology.

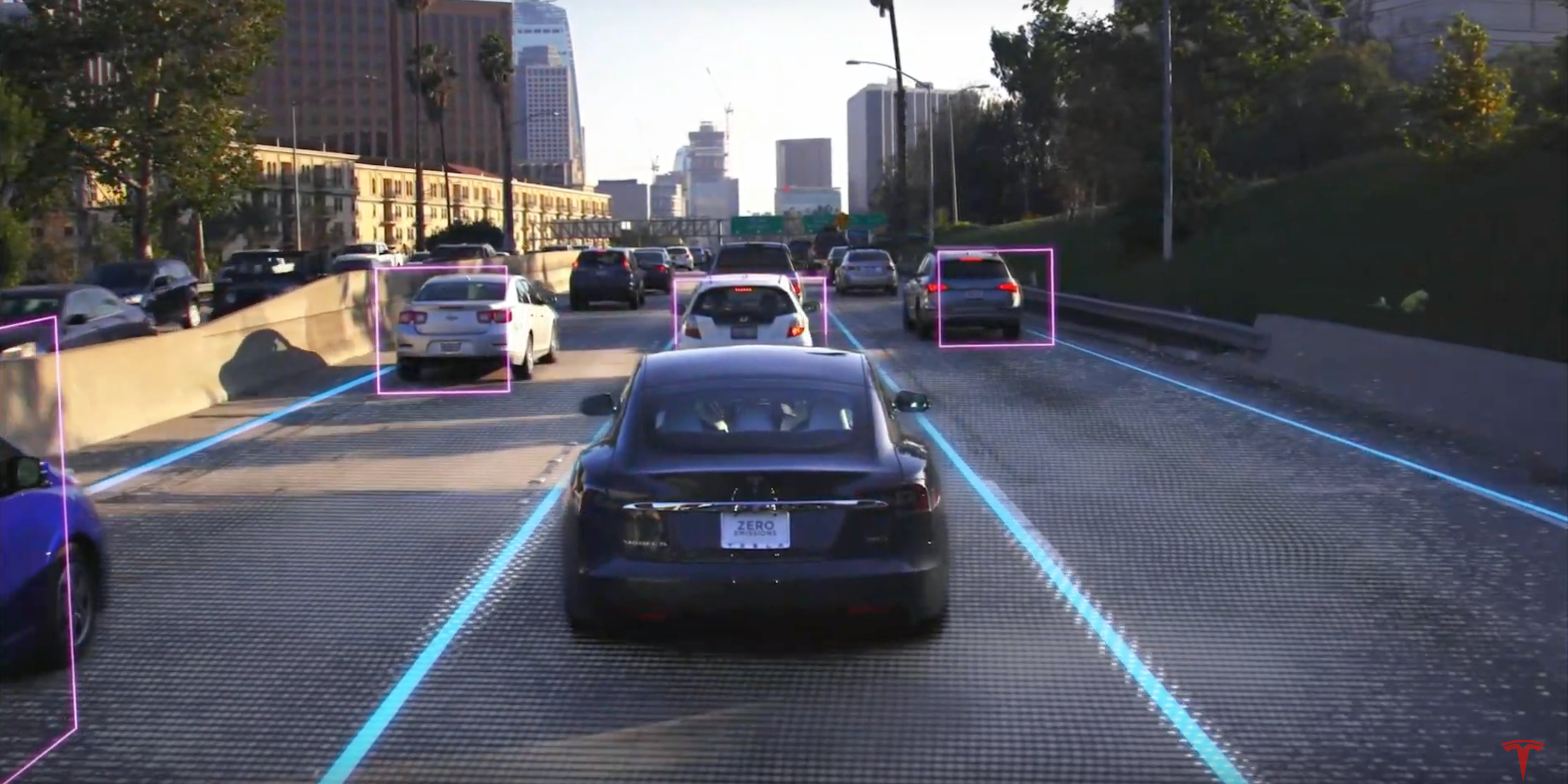

- The company once again shunned lidar, the laser-based sensing technology that most competitors are using for autonomous driving, in favor of camera imagery.

- Some experts have warned that Musk’s previous comments about Tesla’s self-driving software could be unsafe and even unethical.

Tesla’s “autonomy day” kicked off on Monday morning at the electric-vehicle maker’s headquarters in Palo Alto, California, where executives including CEO Elon Musk were expected to give investors more details about the company’s self-driving technology, known as Autopilot.

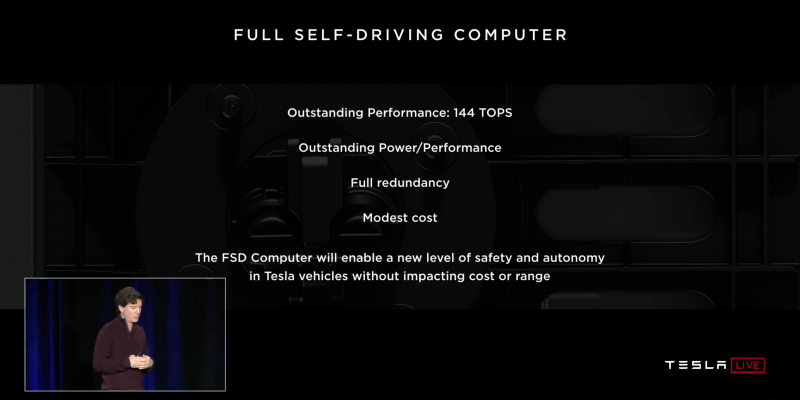

“Tesla is making significant progress in the development of its autonomous driving software and hardware, including our FSD computer, which is currently in production and which will enable full-self driving via future over-the-air software updates,” the company said when it announced the event.

Attendees were given red, Tesla-branded badges with sequential numbers, presumably for test rides of the full self-driving functionality.

Full self-driving demo ride #4! #TeslaAutonomyDay pic.twitter.com/3SMIkPklYM

— Matt Joyce (@matty_mogul) April 22, 2019

Musk took the stage just before noon alongside Pete Bannon, the vice president of Autopilot engineering, as more than 40,000 people watched remotely via the company's live YouTube stream.

Bannon explained how Tesla designed a new chip for its Autopilot software, noting that the company was able to leverage expertise from multiple teams across the business.

"I really love it when a solution is boiled down to its base elements," Bannon said, referring to the chip architecture. "You have video, computing, and power. It's straightforward and simple."

At the core of all of this is safety, Musk added.

"Any part of this could fail and the car will keep driving," Musk said. "The probability of this computer failing is substantially lower than someone losing consciousness."

The computer can verify that any code it runs has been authenticated by Tesla as genuine, Bannon said.

Neural network accelerators on Tesla's new chip can process 2,100 frames per second of incoming imagery from a car's eight constantly running cameras, Bannon said. That's the equivalent of 2.5 billion pixels per second.
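As a rough sanity check on those two figures, the sketch below works out what they imply per frame and per camera. It assumes both numbers describe the same image stream and that frames are split evenly across the eight cameras; neither assumption is stated in the presentation as reported here, and the function names are illustrative only.

```python
# Figures quoted in the article, attributed to Bannon.
FRAMES_PER_SECOND = 2_100
PIXELS_PER_SECOND = 2.5e9
CAMERAS = 8


def pixels_per_frame(pixels_per_second: float, frames_per_second: float) -> float:
    """Implied average frame size, ASSUMING both figures describe one stream."""
    return pixels_per_second / frames_per_second


def frames_per_camera(frames_per_second: float, cameras: int) -> float:
    """Implied per-camera frame rate, ASSUMING an even split across cameras."""
    return frames_per_second / cameras


print(f"Implied pixels per frame: {pixels_per_frame(PIXELS_PER_SECOND, FRAMES_PER_SECOND):,.0f}")
print(f"Implied frames per second per camera: {frames_per_camera(FRAMES_PER_SECOND, CAMERAS):.1f}")
```

Under those assumptions, each frame would be a little under 1.2 million pixels, and each camera would account for roughly 260 frames per second of processing capacity.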

"This is far more detailed than most people would appreciate," Musk said after Bannon finished his presentation. "At first it seems improbable. How could it be that Tesla, who has never designed a chip before, would design the best chip in the world? But that is objectively what has occurred."

But that statement was met with pushback from one competing chip company that Bannon and Musk specifically talked about on stage: Nvidia.

A slide in Tesla's presentation compared the processing capabilities of Tesla's chip with those of Nvidia's Drive Xavier chip, saying that Tesla's chip was capable of 144 TOPS (trillion operations per second) vs. the Drive Xavier's 21 TOPS. But Nvidia says there are two issues with this comparison. First, it says its Drive Xavier chip is actually capable of 30 TOPS, not 21. More importantly, Nvidia says a better comparison would be Tesla's full self-driving computer vs. Nvidia's Drive AGX Pegasus, which it says is capable of 320 TOPS.

"Tesla was inaccurate in comparing its Full Self Driving computer at 144 TOPS of processing with Nvidia Drive Xavier at 21 TOPS," Nvidia told MarketWatch in a statement. "The correct comparison would have been against Nvidia's full self-driving computer, Nvidia Drive AGX Pegasus, which delivers 320 TOPS for AI perception, localization and path planning."
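To see how much the choice of baseline changes the picture, the short sketch below computes Tesla's claimed advantage against each of the three Nvidia figures cited above. It uses only the TOPS numbers quoted by the two companies; the dictionary labels and the `ratio` helper are illustrative, and raw TOPS says nothing about power draw, cost, or real-world performance.

```python
# TOPS figures as quoted in the article; none are independently verified here.
TESLA_FSD_TOPS = 144

NVIDIA_FIGURES = {
    "Drive Xavier (per Tesla's slide)": 21,
    "Drive Xavier (per Nvidia)": 30,
    "Drive AGX Pegasus (per Nvidia)": 320,
}


def ratio(tesla_tops: float, other_tops: float) -> float:
    """How many times the other system's claimed TOPS Tesla's claimed TOPS is."""
    return tesla_tops / other_tops


for name, tops in NVIDIA_FIGURES.items():
    print(f"{name}: Tesla is {ratio(TESLA_FSD_TOPS, tops):.2f}x")
```

Against Tesla's own figure for Drive Xavier the advantage is nearly sevenfold; against Nvidia's figure it is just under fivefold; and against Drive AGX Pegasus, Tesla's computer comes out at less than half the claimed throughput.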

Musk said that all Tesla vehicles produced right now already have the full self-driving chip in place.

The company also once again distanced itself from lidar (light detection and ranging), the laser-based sensing technology that the rest of the industry is using to power self-driving cars.

"Lidar is a fool's errand," Musk said. "Anyone relying on lidar is doomed. Expensive sensors that are unnecessary. It's like having a whole bunch of expensive appendices. Like, one appendix is bad - well, how about a whole bunch of them? That's ridiculous. You'll see."

Artificial intelligence

Andrej Karpathy, Tesla's senior director of artificial intelligence, took the stage at about 12:25 p.m.

"Pete told you all about the chip we've designed that runs the neural networks in the car - my team is responsible for the training of these neural networks, and that includes all of the data collection from the fleet, neural network training, and then some of the deployment onto that chip," he said.

It's up to Tesla's AI software to take in the flood of data coming from the cars and make driving decisions based on those inputs. That includes recognizing lane lines, road signs, stoplights, pedestrians, and more. It's essentially what the human brain does every day: turning light signals into known objects through extensive pattern recognition.

To train a neural network, the computer requires thousands of examples to be fed into its system, Karpathy explained: "There is no substitute for real data."

The system can then be trained on data collected by cars in Tesla's fleet already on the road, Karpathy said. As drivers cover new roads, that data can be loaded into the AI system to further train Autopilot to be a better driver through what Tesla calls path prediction.

Autopilot software updates

Stuart Bowers, vice president of engineering, laid out Tesla's software testing program.

He said a new feature, like automatic lane changing, will first be released in "shadow mode."

Bowers said when "you feel good about it," the feature will go out in a controlled deployment to thousands of people. The more people who use the new feature, the more data Tesla has to understand how it is working.

The company will then do a full rollout when it feels fully confident in the feature.
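The three-step process Bowers described can be pictured as a simple one-way progression. The sketch below is purely illustrative and is not Tesla's software: the stage names come from the article, while the `confident` flag is a hypothetical stand-in for whatever internal criteria lie behind "you feel good about it."

```python
from enum import Enum


class Stage(Enum):
    SHADOW = "shadow mode"
    CONTROLLED = "controlled deployment"
    FULL = "full rollout"


# Stages in the order described in the article.
ORDER = [Stage.SHADOW, Stage.CONTROLLED, Stage.FULL]


def next_stage(current: Stage, confident: bool) -> Stage:
    """Advance one stage only when the team is confident; otherwise stay put.

    `confident` is a hypothetical placeholder for Tesla's real release criteria.
    """
    if not confident or current is Stage.FULL:
        return current
    return ORDER[ORDER.index(current) + 1]


stage = Stage.SHADOW
for confident in (False, True, True):
    stage = next_stage(stage, confident)
    print(stage.value)
```

The point of the design is that a feature never skips a step: it stays where it is until confidence is established, and only then moves to a wider audience.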

Tesla says it is now seeing 100,000 automatic lane changes every day, and that they have occurred with zero accidents.

Robotaxis

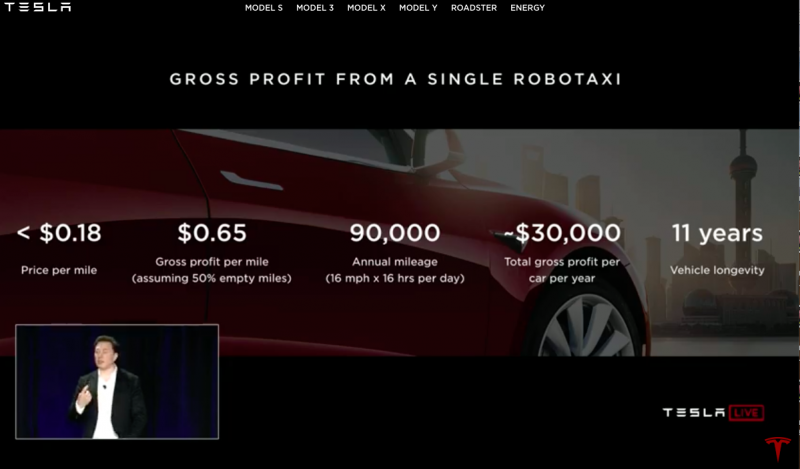

Musk said to expect the first operating robotaxis by next year, with no one in them to start.

Musk said he's "very confident" in predicting the launch of the robotaxi program by next year, though only in some regions because of regulations.

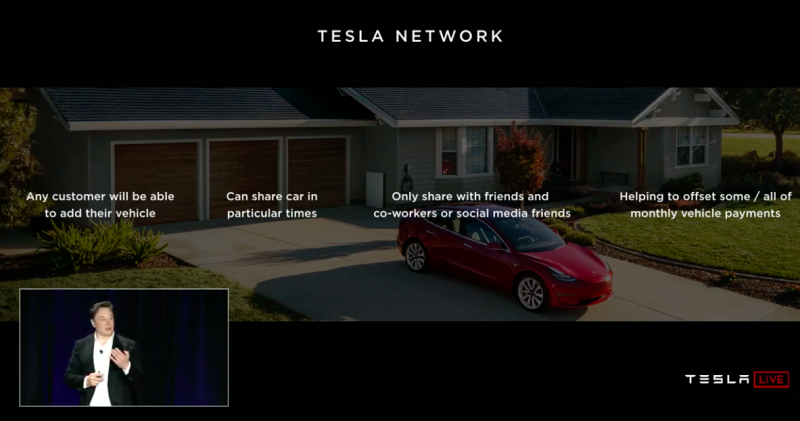

Musk says that any customer will be able to add their car to, or remove it from, the "Tesla Network," which he describes as a combination of Uber and Airbnb. Similar to those platforms, Tesla will take around 25-30% of the revenue generated from the ride shares.

In places where not enough people are sharing their cars, there will be Tesla-owned vehicles to handle the demand.

Tesla will have its own ride-hailing app for customers to summon a ride or for them to commit their cars to the fleet.

Model 3 and Model S vehicles will be used as taxis, Musk says.

The impact on the ride-sharing industry could be massive. As Musk pointed out, the average cost of a ride-share today is around $2-$3 per mile. Tesla estimates a robotaxi will cost riders less than $0.18 per mile.
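To put that gap in concrete terms, the sketch below compares a hypothetical 10-mile trip at each rate. It assumes Tesla's $0.18 figure is a per-mile price comparable to the $2-$3 ride-share range and treats it as an upper bound, since Tesla said "less than"; the trip length and helper names are illustrative only.

```python
# Per-mile figures as quoted in the article.
RIDE_SHARE_COST_PER_MILE = (2.00, 3.00)  # low and high ends of Musk's range
ROBOTAXI_COST_PER_MILE = 0.18            # ASSUMED per mile; treated as an upper bound


def trip_cost(miles: float, cost_per_mile: float) -> float:
    """Cost of a trip at a flat per-mile rate."""
    return miles * cost_per_mile


def savings_factor(ride_share_rate: float, robotaxi_rate: float) -> float:
    """How many times more expensive the ride-share rate is."""
    return ride_share_rate / robotaxi_rate


TRIP_MILES = 10  # hypothetical trip length
low, high = RIDE_SHARE_COST_PER_MILE
print(f"Ride-share: ${trip_cost(TRIP_MILES, low):.2f} to ${trip_cost(TRIP_MILES, high):.2f}")
print(f"Robotaxi:   under ${trip_cost(TRIP_MILES, ROBOTAXI_COST_PER_MILE):.2f}")
print(f"Ride-share is at least {savings_factor(low, ROBOTAXI_COST_PER_MILE):.1f}x "
      f"to {savings_factor(high, ROBOTAXI_COST_PER_MILE):.1f}x more expensive")
```

On those numbers, a trip that costs $20 to $30 today would cost under $2 in a robotaxi, a difference of more than tenfold.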

An owner could make $30,000 a year in profit from a robotaxi by allowing it to operate on the Tesla Network, Musk said. The cars, he said, are designed to run at least a million miles.

By the middle of next year, Musk says, more than a million Tesla cars will be on the road with full self-driving hardware, which means there is the potential for one million robotaxis by then.

"The fleet wakes up with a middle of the year with an update," Musk said. "That's all it takes."

Robotaxis will be able to park and plug in automatically, Musk said, so no human assistance will be needed.

Regarding questions around competition, Musk reminded the audience that because of a clause in Tesla's buyer agreement, self-driving Teslas are only allowed to operate on a Tesla-run network. That means autonomous Teslas cannot be used on other ride-sharing services like Lyft or Uber.

"The fundamental message that consumers should be taking today is that it is financially insane to buy anything other than a Tesla," Musk said. "It will be like owning a horse in three years. I mean, fine if you want to own a horse. But you should go into it with that expectation."

Wall Street wary of new announcements

Wall Street analysts have said there are more pressing issues Tesla investors should be worrying about ahead of the company's first-quarter earnings report on Wednesday.

"While we firmly believe in the long term vision for Tesla and expect self driving autonomous technology will be a linchpin of the company's success," Daniel Ives, an analyst at Wedbush, said in a note to clients Monday.

"The Street needs to have a better grasp on the near term demand trajectory in the US for 2Q, delivery logistics for Model 3 in Europe/ China which had been a key culprit for the 1Q debacle, and better understanding of the tenuous balance sheet situation for Musk & Co. going forward for the stock to stabilize."

Arndt Ellinghorst, an analyst at Evercore, said he was waiting for specifics before assigning any value to Tesla's self-driving suite.

"We're certain the market is going to get a lot of promises at the investor day tonight, but we'd like to see more proof before assigning a significant value," he said in a note to clients downgrading shares of Tesla from "in line" to "underperforming."

"The market is assigning very little value to autonomous assets within public OEM's (even when they have direct valuations like GM/Cruise) and the market is not going to give Tesla credit for Musk's promises without very near term KPI's."

Musk's previous comments about Tesla's self-driving capabilities have drawn criticism from some industry experts, who say the billionaire has overhyped certain technologies in a way that could even be unethical.